The current corporate discourse surrounding Artificial Intelligence is dangerously skewed toward application-layer deployment. Boards and executive teams are aggressively mandating the procurement of conversational agents, copilots, and generative wrappers to plug into existing workflows. This is a critical architectural error. Installing a high-performance neural engine into a legacy data chassis does not yield a faster vehicle; it accelerates systemic structural failure.

This is where many enterprise AI initiatives fail: not for lack of ambition, but for lack of a coherent enterprise AI strategy grounded in infrastructure realities.

True enterprise AI readiness is not a procurement milestone. It is a fundamental refactoring of an organization’s data infrastructure, cloud economics, and security topography.

For executives evaluating enterprise AI solutions, assessing readiness requires abandoning surface-level metrics (like how many employees have access to an LLM) and conducting a rigorous, first-principles audit across four systemic pillars: Semantic Data Architecture, Compute Economics (FinOps), Deterministic Security, and Organizational Topology.

Pillar 1: The Shift to Semantic Data Architecture in an Enterprise AI Readiness Framework

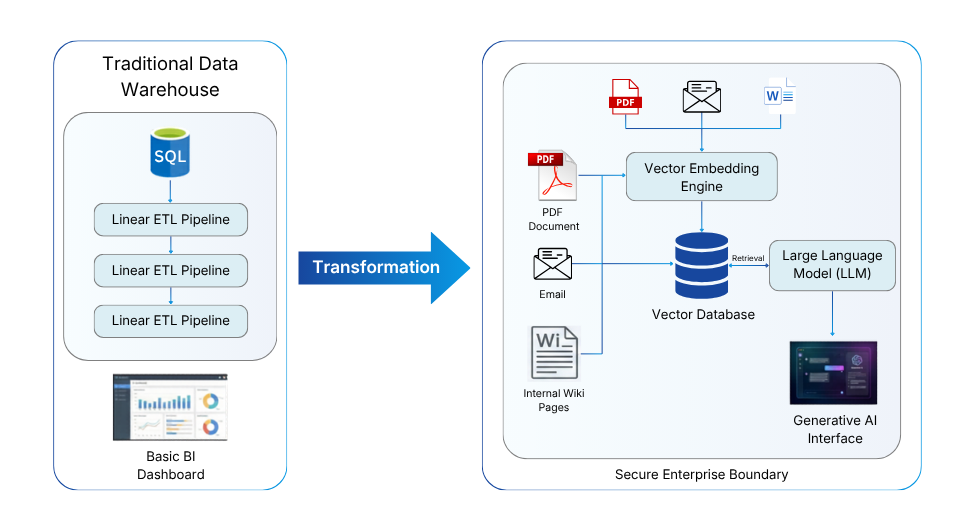

Historically, enterprise data readiness meant achieving structured, clean data within relational databases (SQL) or centralized data warehouses. Generative AI makes this baseline necessary but no longer sufficient. Large Language Models (LLMs) do not query data through rows, keys, and joins; they work with semantic similarity, relating meaning across large, unstructured datasets.

To be AI-ready, an enterprise must transition from purely relational storage to an architecture capable of supporting Retrieval-Augmented Generation (RAG). This is not optional; it is the core mechanism for grounding model output in your own data, which reduces (though does not eliminate) hallucination, and it is foundational to any enterprise AI readiness framework.

- Vectorization of Proprietary Knowledge: Executives must ask a fundamental question: Has our unstructured data (PDFs, internal wikis, email repositories, customer logs, and legacy code) been converted into vector embeddings? An LLM cannot search a document repository on its own. The text must be extracted, passed through an embedding model that converts it into high-dimensional vectors, and stored in a dedicated vector database so that relevant passages can be retrieved at query time.

Without this layer, even the best AI for enterprise use cases will fail to deliver contextual accuracy.

- The Fine-Tuning Fallacy: Many organizations assume they must spend millions of dollars fine-tuning foundational models on their data. A mature enterprise AI strategy recognizes that a robust RAG architecture allows you to use off-the-shelf, general-purpose models grounded in your retrieved vector data. This can drastically reduce time-to-market and compute costs, and it limits exposure to “model drift” (where a fine-tuned model degrades as the underlying data changes), because updating the knowledge base means re-indexing documents rather than retraining a model.

- Data Latency and Event Streaming: Predictive machine learning models are trained on historical data; generative AI applications often need current context at query time. If your data pipeline relies on nightly batch processing rather than real-time event streaming (e.g., Apache Kafka), your AI outputs can be stale the moment they are generated.

This is precisely why modern AI-first data engineering practices are becoming a prerequisite for scalable enterprise AI platforms.
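To make the retrieval step concrete, here is a minimal sketch of the embed, store, retrieve, and ground loop described above. It is an illustration under loud assumptions: `embed` is a toy hashed bag-of-words function standing in for a real embedding model, `InMemoryVectorStore` is a hypothetical stand-in for a vector database, and the sample documents are invented.

```python
import hashlib
import math
import re

DIM = 256  # toy size; production embedding models use far larger vectors


def embed(text: str) -> list[float]:
    """Toy stand-in for an embedding model: hashed bag-of-words, L2-normalized."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.sha256(token.encode("utf-8")).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; a plain dot product because inputs are unit-length."""
    return sum(x * y for x, y in zip(a, b))


class InMemoryVectorStore:
    """Hypothetical stand-in for a vector database."""

    def __init__(self) -> None:
        self._rows: list[tuple[list[float], str]] = []

    def add(self, text: str) -> None:
        self._rows.append((embed(text), text))

    def search(self, query: str, k: int = 2) -> list[str]:
        q = embed(query)
        ranked = sorted(self._rows, key=lambda row: cosine(q, row[0]), reverse=True)
        return [text for _, text in ranked[:k]]


def build_grounded_prompt(question: str, passages: list[str]) -> str:
    """Assemble a prompt that restricts the model to the retrieved passages."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


store = InMemoryVectorStore()
store.add("Employees receive 25 days of paid holiday per calendar year.")  # invented sample
store.add("Expense reports must be submitted within 30 days of purchase.")  # invented sample
store.add("The VPN client must be updated every quarter.")  # invented sample

question = "How many days of paid holiday do employees get?"
passages = store.search(question, k=1)
prompt = build_grounded_prompt(question, passages)
print(prompt)
```

The model only ever sees the passages that retrieval returns, which is why the quality of the embedding and indexing layer, rather than the choice of LLM, tends to bound answer quality.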

Pillar 2: AI FinOps and Compute Economics for Scalable Enterprise AI Solutions

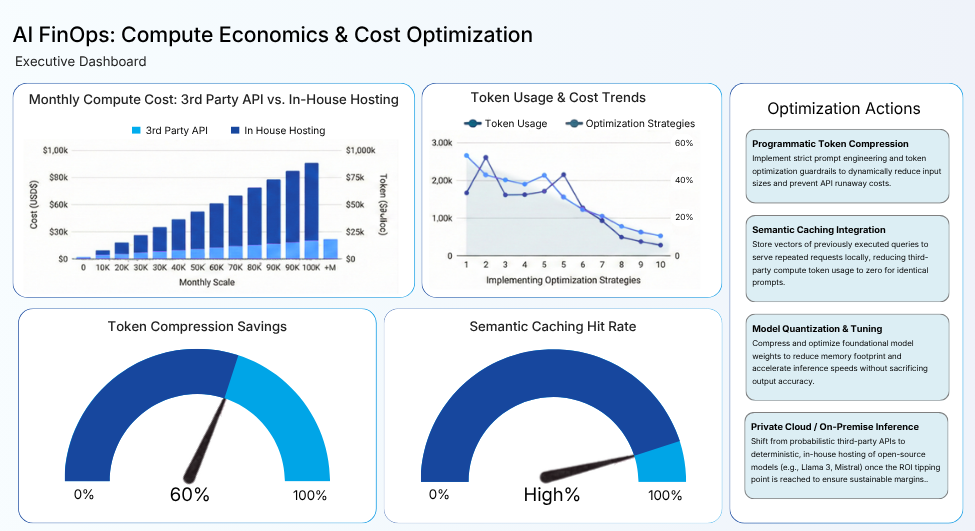

The integration of generative AI introduces a volatile, highly unpredictable variable into enterprise cloud economics: Probabilistic Compute. Traditional software executes deterministic code, meaning the compute cost per transaction is fixed, measurable, and highly predictable. LLMs, however, operate on token-based economics.

The input prompt size and the generated output length dictate the API cost dynamically, creating the risk of uncontrolled spend. This shift is central to understanding how AI is used in enterprises: not only as a capability layer, but as a cost center that must be engineered with precision.

Executive readiness requires the immediate implementation of strict AI FinOps (Financial Operations). You cannot scale AI for enterprise if you cannot predict its burn rate.
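A back-of-the-envelope model shows how prompt and output length drive the burn rate. The sketch below is illustrative only: the per-million-token prices, request volumes, and token counts are placeholder assumptions, not any vendor's actual rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPricing:
    input_per_million: float   # USD per 1M input tokens (placeholder value)
    output_per_million: float  # USD per 1M output tokens (placeholder value)


def request_cost(p: TokenPricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of a single request."""
    return (
        input_tokens * p.input_per_million + output_tokens * p.output_per_million
    ) / 1_000_000


def monthly_burn(
    p: TokenPricing, requests_per_day: int, avg_in: int, avg_out: int, days: int = 30
) -> float:
    """Projected monthly spend for a steady workload."""
    return request_cost(p, avg_in, avg_out) * requests_per_day * days


pricing = TokenPricing(input_per_million=3.0, output_per_million=15.0)  # hypothetical

lean = monthly_burn(pricing, requests_per_day=10_000, avg_in=500, avg_out=200)
bloated = monthly_burn(pricing, requests_per_day=10_000, avg_in=4_000, avg_out=800)

print(f"lean prompts:    ${lean:,.2f}")     # $1,350.00
print(f"bloated prompts: ${bloated:,.2f}")  # $7,200.00
```

Under these assumed numbers, the same 10,000 daily requests cost more than five times as much when prompts and answers are allowed to grow, which is the variability a FinOps practice has to forecast and cap.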

- Token Optimization Strategies: Without guardrails, a poorly optimized internal AI tool can trigger runaway API costs. Are your engineering teams trained in programmatic token compression? More importantly, has the enterprise implemented Semantic Caching?

If 500 employees ask an internal HR bot about the holiday policy, the system should not send 500 semantically equivalent requests to OpenAI or Anthropic. A semantic cache stores the vector of the first question and its answer; the next 499 queries are served from the cache and incur no LLM generation cost, only the far smaller cost of embedding each query and searching the cache.

A semantic cache ensures that repeated queries do not repeatedly incur costs, an essential capability for production-grade enterprise AI solutions.
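The caching pattern can be sketched in a few lines. This is a simplified illustration, not a production design: `embed` is a toy hashed bag-of-words function standing in for a real embedding model, `CountingLLM` is a hypothetical stand-in for a paid API, and the 0.9 similarity threshold is an arbitrary assumption that would need tuning.

```python
import hashlib
import math
import re
from typing import Optional


def embed(text: str, dim: int = 256) -> list[float]:
    """Toy stand-in for an embedding model: hashed bag-of-words, L2-normalized."""
    vec = [0.0] * dim
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.sha256(token.encode("utf-8")).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


class SemanticCache:
    """Returns a stored answer when a new question is close enough to an old one."""

    def __init__(self, threshold: float = 0.9) -> None:
        self.threshold = threshold  # assumed cut-off; tune on real traffic
        self._entries: list[tuple[list[float], str]] = []

    def lookup(self, question: str) -> Optional[str]:
        q = embed(question)
        best_score, best_answer = -1.0, None
        for vec, answer in self._entries:
            score = sum(a * b for a, b in zip(q, vec))  # cosine of unit vectors
            if score > best_score:
                best_score, best_answer = score, answer
        return best_answer if best_score >= self.threshold else None

    def store(self, question: str, answer: str) -> None:
        self._entries.append((embed(question), answer))


class CountingLLM:
    """Hypothetical stand-in for a paid LLM API; counts billable calls."""

    def __init__(self) -> None:
        self.calls = 0

    def complete(self, question: str) -> str:
        self.calls += 1
        return f"(model answer to: {question})"


def answer(question: str, cache: SemanticCache, llm: CountingLLM) -> str:
    cached = cache.lookup(question)
    if cached is not None:
        return cached  # cache hit: no generation cost
    result = llm.complete(question)
    cache.store(question, result)
    return result


cache, llm = SemanticCache(), CountingLLM()
first = answer("What is the holiday policy?", cache, llm)
repeat = answer("what is the holiday policy", cache, llm)  # served from cache
other = answer("How do I reset my VPN password?", cache, llm)
print(llm.calls)  # 2
```

The toy embedding only matches questions that share the same words; recognizing genuine paraphrases depends on a real embedding model. The threshold is also a trade-off: set it too low and users receive answers to questions they did not ask.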

- The On-Premise Tipping Point: As enterprise reliance on AI scales from hundreds of queries a day to millions, relying solely on third-party APIs can become economically unsustainable, because per-token fees grow linearly with volume.

At this stage, organizations evaluating enterprise AI platforms must determine when shifting to self-hosted or hybrid infrastructure becomes economically viable.
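One way to frame the tipping point is a simple break-even calculation: self-hosting pays off once the per-query savings cover the fixed monthly cost of running your own infrastructure. The sketch below assumes a deliberately simplified cost model, and every figure in it is hypothetical.

```python
import math
from typing import Optional


def break_even_queries_per_month(
    api_cost_per_1k: float,          # USD per 1,000 queries via third-party API
    fixed_monthly_cost: float,       # USD per month for GPUs, hosting, and staff
    self_hosted_cost_per_1k: float,  # USD marginal cost per 1,000 self-hosted queries
) -> Optional[int]:
    """Monthly query volume above which self-hosting is cheaper, or None if never."""
    saving_per_1k = api_cost_per_1k - self_hosted_cost_per_1k
    if saving_per_1k <= 0:
        return None  # the API is cheaper at any volume
    return math.ceil(fixed_monthly_cost / saving_per_1k * 1000)


# All figures below are hypothetical placeholders.
volume = break_even_queries_per_month(
    api_cost_per_1k=10.0,
    fixed_monthly_cost=20_000.0,
    self_hosted_cost_per_1k=2.0,
)
print(volume)  # 2500000
```

With these assumed inputs the crossover sits at 2.5 million queries a month, roughly 83,000 a day. A real evaluation would also account for utilization, engineering headcount, and model quality differences, which this sketch ignores.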

Pillar 3: Deterministic Security and Data Ring-Fencing

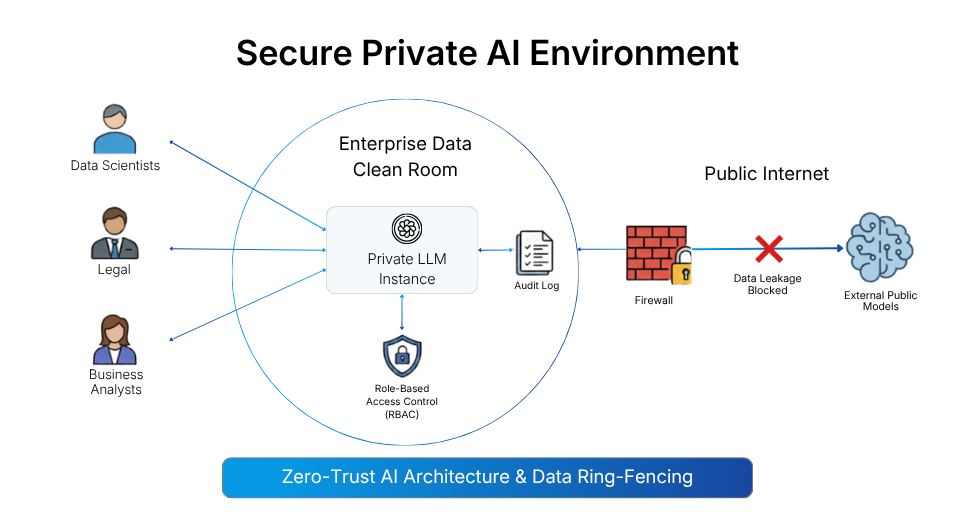

The most common and justified executive fear regarding AI adoption is the unintentional leakage of intellectual property or Personally Identifiable Information (PII) into a public model. AI readiness requires moving from a reactive cybersecurity posture to a deterministic, zero-trust one.

Enterprise AI requires enterprise-grade armor. You cannot rely on employees to “remember” not to paste sensitive data into public tools. This is a defining checkpoint in achieving true enterprise AI readiness.

- Data Ring-Fencing and Clean Rooms: An AI-ready enterprise implements a “data clean room” architecture. This means deploying private models within a Virtual Private Cloud (VPC), isolated from public endpoints, which is critical for enterprise AI deployments in regulated industries.

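- Vector-Level Role-Based Access Control (RBAC): Security must be applied at the embedding level. Each embedded chunk should carry the access permissions of its source document, and the RAG retrieval layer must enforce the organization's existing IAM policies so that users can only retrieve content they are already authorized to see. This is an essential feature of enterprise AI platforms.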

- Algorithmic Auditing: As global AI regulations tighten, your models must remain fully auditable. This is non-negotiable for industries where enterprise AI solutions directly influence financial or clinical outcomes.
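The access-control and audit ideas above can be sketched together: filter retrieved chunks by the caller's roles before anything reaches the prompt, and record what was released. This is an illustrative sketch, not a reference design; `Chunk`, `authorized_context`, the role names, and the sample data are all hypothetical, and the similarity scores are assumed to come from an earlier vector search.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    chunk_id: str
    text: str
    allowed_roles: frozenset  # roles inherited from the source document's ACL
    score: float              # similarity score from a prior vector search (assumed)


def authorized_context(chunks, user_id, user_roles, audit_log, k=3):
    """Return the top-k chunks the user may see and log what was released."""
    # Enforce access before ranking so restricted text never reaches the prompt.
    visible = [c for c in chunks if c.allowed_roles & user_roles]
    top = sorted(visible, key=lambda c: c.score, reverse=True)[:k]
    audit_log.append({"user": user_id, "chunk_ids": [c.chunk_id for c in top]})
    return top


# Invented sample data for illustration.
chunks = [
    Chunk("fin-1", "Q3 revenue forecast", frozenset({"finance"}), 0.92),
    Chunk("hr-1", "Holiday policy", frozenset({"hr", "all-staff"}), 0.81),
    Chunk("hr-2", "Salary bands", frozenset({"hr"}), 0.77),
]

audit_log = []
released = authorized_context(chunks, "u-1001", {"all-staff"}, audit_log)
print([c.chunk_id for c in released])  # ['hr-1']
print(audit_log)
```

Filtering before prompt assembly matters because an LLM cannot be trusted to withhold text it has already been given. In practice many vector databases support metadata filters, so the same check can be pushed into the query itself.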

Pillar 4: Organizational Topology and the AI CoE in an Enterprise AI Strategy

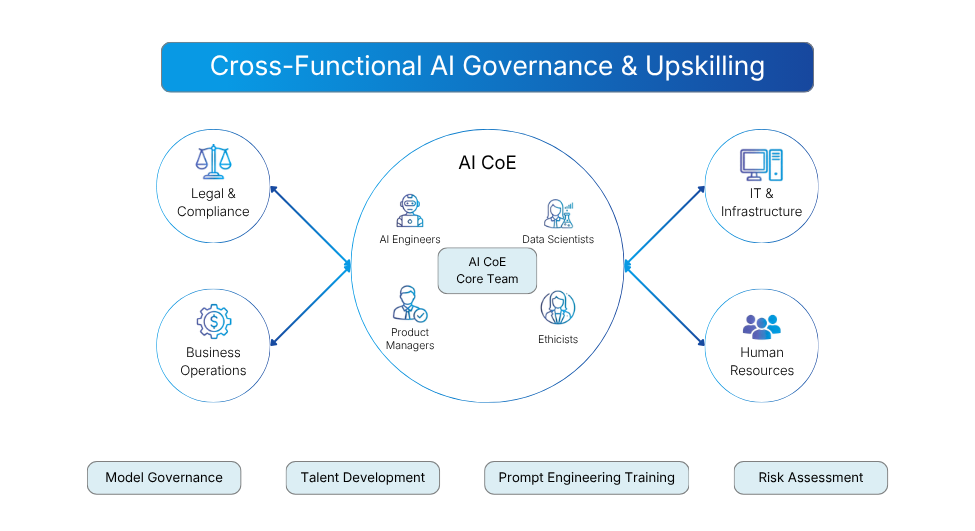

The final, and often most difficult, pillar of readiness is human infrastructure. AI is a horizontal, cross-functional discipline that cannot be isolated within IT or engineering departments.

An AI-ready organization must restructure itself around a unified enterprise AI strategy, anchored by an AI Center of Excellence (CoE).

- Moving Beyond IT Support: This CoE is not a helpdesk. It is an operational hub combining engineering, compliance, product, and ethics, ensuring that AI for enterprise is deployed responsibly and at scale.

- Systemic AI Literacy: You cannot simply hire your way to AI readiness. Organizations must train their workforce to understand how AI is used in enterprises, including prompt design, output validation, and workflow transformation.

- Establishing Model Governance: The CoE defines the ethical and operational guardrails. Before deployment, CoEs must evaluate risk, accountability, and acceptable failure thresholds, core principles of any sustainable enterprise AI readiness framework.

The Path Forward: From Adoption to True Enterprise AI Readiness

Becoming AI-ready is not a software installation; it is an ongoing state of operational vigilance. It requires treating your entire organization (its data, its cloud budget, its security protocols, and its people) as one integrated system.

The executives who will lead in the era of enterprise AI solutions are not those who adopt tools the fastest, but those who build the strongest foundations.

Because in the end, the question is not whether you are using AI. The real question is whether your architecture, economics, and organization are prepared to scale AI for enterprise without breaking.