The era of static prediction models is long gone. Enterprises are no longer content with models that score, classify, or predict in isolation. The frontier today is agentic AI—autonomous, goal-driven systems that plan, act, use tools, and adapt over time. These systems are being designed not just to answer questions, but to accelerate research, drive workflows, and fuel growth.

As enterprises scale into this new paradigm, the stakes rise. Architectures must handle real-time data ecosystems, prepare for quantum-ready infrastructures, and enforce privacy-preserving computation across sensitive workflows. And the platform of choice must be as unified as it is extensible. This is where Databricks Mosaic AI offers both vision and execution.

Databricks as the Platform for Agentic AI

Databricks provides a unified data + AI stack—a critical foundation for building enterprise AI agents that are not only powerful, but also governed, observable, and composable.

Key enablers include:

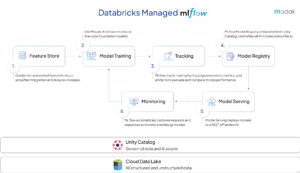

- Unity Catalog: Fine-grained governance, security, and lineage across data and AI assets. This means that agents can access the data they need, while enterprises retain strict oversight and control.

- MLflow: Lifecycle management, observability, and tracing for models and agents. It enables logging, tracing, and monitoring of every inference call, prompt, and tool invocation, turning opaque AI into observable, auditable workflows.

- Mosaic AI: Extends the Lakehouse into a foundation for agentic systems, with Databricks foundation models, vector search, and evaluation frameworks built natively into the platform.

Together, these components allow enterprises to design agentic systems not as fragile proofs-of-concept, but as production-grade architectures aligned with the Databricks Agentic AI Framework.

Core Building Blocks of Agentic Systems

Agentic systems are only as reliable as their underlying components: retrieval, reasoning, orchestration, memory, and monitoring. Databricks delivers these as native, modular, yet unified services, tightly integrated with the Lakehouse and Unity Catalog.

1. Mosaic AI: Vector Search

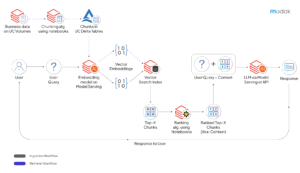

At the core of any context-aware agent is its ability to retrieve relevant knowledge. Databricks vector search is a semantic search layer that transforms static enterprise data into real-time, queryable memory.

- Indexing: Embed documents, structured tables, and unstructured files (PDFs, tickets, transcripts) using embedding models such as BGE, other OSS models, or embedding models served from Databricks endpoints. Store these embeddings in Unity Catalog–managed indexes for governance and lifecycle control.

- Querying: Encode incoming queries and retrieve top-k documents using similarity metrics (cosine, dot product). Results can be reranked or fused with keyword search for hybrid retrieval.

- Fine-Grained Security: Every index is tied to Unity Catalog ACLs, ensuring that an HR agent cannot access financial records, or that customer data remains tenant isolated.

- Integration: Vector search results can be programmatically injected into prompts from Databricks notebooks, MLflow deployments, or Workflows, supporting end-to-end Retrieval-Augmented Generation (RAG) pipelines.

This component ensures that agents are never answering in isolation, but always grounding responses in fresh, governed enterprise knowledge powered by Databricks Mosaic AI.
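The querying and fine-grained security steps above can be illustrated with a small, library-free sketch. It is not the Databricks Vector Search API: the `Doc` class, the `allowed_groups` field, and the three-dimensional embeddings are hypothetical stand-ins chosen so the ranking is easy to follow. The sketch filters documents by the caller's groups first, then ranks the remaining ones by cosine similarity.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    embedding: tuple[float, ...]      # toy 3-d vectors; real embeddings are much larger
    allowed_groups: frozenset[str]    # hypothetical stand-in for index-level ACLs


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_embedding, docs, user_groups, k=2):
    """Return the k most similar documents the caller is allowed to see."""
    # Apply the access filter before ranking so hidden documents never leak.
    visible = [d for d in docs if d.allowed_groups & user_groups]
    ranked = sorted(
        visible,
        key=lambda d: cosine(query_embedding, d.embedding),
        reverse=True,
    )
    return ranked[:k]


DOCS = [
    Doc("hr-1", "Leave policy", (0.9, 0.1, 0.0), frozenset({"hr"})),
    Doc("fin-1", "Q3 revenue summary", (0.1, 0.9, 0.0), frozenset({"finance"})),
    Doc("hr-2", "Onboarding checklist", (0.7, 0.2, 0.1), frozenset({"hr"})),
]

hr_results = top_k((1.0, 0.0, 0.0), DOCS, user_groups=frozenset({"hr"}))
fin_results = top_k((1.0, 0.0, 0.0), DOCS, user_groups=frozenset({"finance"}))
print([d.doc_id for d in hr_results], [d.doc_id for d in fin_results])
```

Filtering before ranking is the design choice worth noting: the finance caller gets only the finance document even though the query is closer to the HR content.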

2. Foundation Models for Cognition

Reasoning and planning are central to agentic intelligence. Databricks hosts a range of foundation models, from its own DBRX to other open models such as Llama 2, exposed via managed REST endpoints and deeply integrated with MLflow, forming a robust ecosystem of Databricks foundation models.

- Hosted Models: Call DBRX or OSS models through mlflow.deployments.get_deploy_client() for seamless inference.

- Prompt Engineering: Define reusable templates that combine system instructions, user queries, and retrieved context. Templates can be versioned and tracked via MLflow.

- Multi-turn Support: Use Delta tables or MLflow memory components to persist conversational state across interactions, enabling continuity in reasoning.

- Streaming Inference: For interactive agents, invoke streaming endpoints to deliver partial outputs token-by-token, reducing latency in user-facing scenarios.

- Fine-Tuning: Apply domain-specific fine-tuning to align foundation models with enterprise requirements (e.g., regulatory compliance, domain vocabulary, brand tone).

These models function as the cognitive core—interpreting intent, planning multi-step actions, and synthesizing outputs from retrieved knowledge and tool results.
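As a rough sketch of the prompt-engineering and multi-turn points above, the snippet below assembles a chat payload from a system instruction, prior turns, retrieved context, and the new question. The template text, `build_messages`, and the endpoint name `my-chat-endpoint` are illustrative assumptions. The optional `call_endpoint` helper uses `mlflow.deployments.get_deploy_client("databricks")` and is not executed here, since it needs a Databricks workspace and a deployed chat endpoint.

```python
from string import Template

# Hypothetical template text; in practice this would be versioned and tracked.
SYSTEM_PROMPT = "You are a support agent. Answer only from the provided context."
USER_TEMPLATE = Template("Context:\n$context\n\nQuestion: $question")


def build_messages(question, retrieved_chunks, history=()):
    """Combine system instructions, prior turns, context, and the new question."""
    context = "\n".join(f"- {chunk}" for chunk in retrieved_chunks)
    messages = [{"role": "system", "content": SYSTEM_PROMPT}]
    messages.extend(history)  # earlier user/assistant turns, oldest first
    messages.append(
        {
            "role": "user",
            "content": USER_TEMPLATE.substitute(context=context, question=question),
        }
    )
    return messages


def call_endpoint(messages, endpoint="my-chat-endpoint"):
    """Send the payload to a served chat model (endpoint name is a placeholder)."""
    # Imported lazily so the rest of the sketch runs without MLflow installed.
    from mlflow.deployments import get_deploy_client

    client = get_deploy_client("databricks")
    return client.predict(endpoint=endpoint, inputs={"messages": messages})


history = [
    {"role": "user", "content": "Is invoice INV-7 overdue?"},
    {"role": "assistant", "content": "Yes, by 12 days."},
]
messages = build_messages(
    "Who is the account owner?",
    ["INV-7 belongs to account ACME.", "ACME is owned by the EMEA team."],
    history,
)
print(messages[-1]["content"])
```

Keeping prompt assembly separate from the model call makes the template testable on its own and lets the same payload be logged or replayed later.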

3. Decision Making and Orchestration

Agents must act, not just think. Mosaic AI integrates with Databricks Workflows to orchestrate tool use and multi-step decision-making across enterprise systems. This orchestration layer is foundational to agentic AI on Databricks, including Azure Databricks deployments.

- Function Registry: Register Python functions or SQL commands in Unity Catalog as reusable, schema-validated tools. For example, fetch_invoice or query_errors.

- Workflow Tasks: Define orchestrated flows such as: API call > validate thresholds > summarize results > write to Delta. Each step is observable and logged.

- Dynamic Tool Calling: Support agentic orchestration loops like ReAct, planner-executor, or multi-agent protocols (LangChain, LangGraph, CrewAI). Agents can dynamically select tools based on their current reasoning state.

- External Integrations: Call out to third-party systems such as Salesforce, ServiceNow, or RESTful microservices, with retries, idempotency keys, and circuit breakers for resilience.

This orchestration layer turns reasoning into actionable workflows, bridging foundation model cognition with enterprise execution.
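To make the function registry and dynamic tool calling ideas concrete, here is a minimal in-memory sketch. It does not use Unity Catalog; the `TOOLS` dictionary, the `register_tool` decorator, and the stubbed bodies of `fetch_invoice` and `query_errors` are assumptions for illustration. The point is that a model-proposed call is checked against a declared schema before anything executes.

```python
from typing import Any

# Hypothetical in-memory registry standing in for governed, catalog-registered tools.
TOOLS: dict[str, dict[str, Any]] = {}


def register_tool(name, schema):
    """Register a function as a tool together with its argument schema."""
    def decorator(fn):
        TOOLS[name] = {"fn": fn, "schema": schema}
        return fn
    return decorator


@register_tool("fetch_invoice", {"invoice_id": str})
def fetch_invoice(invoice_id):
    # Stubbed result; a real tool would query a table or an API.
    return {"invoice_id": invoice_id, "status": "paid"}


@register_tool("query_errors", {"service": str, "limit": int})
def query_errors(service, limit):
    # Stubbed result; returns placeholder error identifiers.
    return [f"{service}-error-{i}" for i in range(limit)]


def call_tool(name, arguments):
    """Validate a model-proposed tool call, then execute it."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    schema = TOOLS[name]["schema"]
    if set(arguments) != set(schema):
        raise ValueError(f"{name} expects arguments {sorted(schema)}")
    for key, expected_type in schema.items():
        if not isinstance(arguments[key], expected_type):
            raise TypeError(f"{name}.{key} must be {expected_type.__name__}")
    return TOOLS[name]["fn"](**arguments)


# A planner (not shown) would emit calls like this one based on its reasoning state.
result = call_tool("fetch_invoice", {"invoice_id": "INV-1"})
print(result)
```

Rejecting unknown tools, missing or extra arguments, and wrong types up front keeps a hallucinated call from reaching a downstream system.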

4. Memory & Recall

Agents need persistence to move beyond stateless interactions. Mosaic AI supports tiered memory architectures that combine ephemeral context with durable recall.

- Short-Term Memory: Buffers intermediate thoughts, tool outputs, and conversation context within a single task execution. Often implemented as MLflow spans or workflow-local caches.

- Long-Term Memory: Persist episodic recall—such as past incidents, user preferences, or business KPIs—in Delta Lake tables or vector databases.

- Embedding Memories: Convert structured logs (e.g., system metrics, ticket updates) into embeddings, allowing semantic retrieval in future sessions.

- Context Injection: Memory states are retrieved via Vector Search and injected into prompts at runtime, ensuring continuity of reasoning across days, weeks, or months.

This layered approach enables agents to act not only in the moment but with awareness of history, preferences, and enterprise context — essential for scalable enterprise AI agents.
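The tiered design described above can be sketched in a few lines. This is an illustrative in-process model, not a Mosaic AI component: `AgentMemory` and its methods are hypothetical names, the long-term store is a plain list rather than a Delta table, and tag overlap stands in for semantic retrieval over embeddings.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class AgentMemory:
    short_term_size: int = 3
    short_term: deque = field(init=False)
    long_term: list = field(default_factory=list)

    def __post_init__(self):
        # Bounded buffer: the oldest entries fall off as new ones arrive.
        self.short_term = deque(maxlen=self.short_term_size)

    def observe(self, event):
        """Record an intermediate thought or tool output for the current task."""
        self.short_term.append(event)

    def remember(self, fact, tags):
        """Persist a durable fact (a real system would write to durable storage)."""
        self.long_term.append({"fact": fact, "tags": set(tags)})

    def recall(self, tags):
        """Return durable facts sharing at least one tag with the request."""
        wanted = set(tags)
        return [m["fact"] for m in self.long_term if m["tags"] & wanted]

    def build_context(self, tags):
        """Assemble what would be injected into the prompt at runtime."""
        return {"recent": list(self.short_term), "recalled": self.recall(tags)}


memory = AgentMemory(short_term_size=2)
memory.remember("User prefers weekly summaries", ["preferences"])
memory.remember("Outage on 2024-03-02 traced to disk pressure", ["incidents"])
for step in ["plan drafted", "tool called", "result validated"]:
    memory.observe(step)

context = memory.build_context(["incidents"])
print(context)
```

The bounded short-term buffer keeps prompts small within a task, while the long-term store survives across sessions and is queried only for what is relevant.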

5. Monitoring, Governance & Agent Ops

Without observability and safety, agentic AI remains a black box. Mosaic AI builds in AgentOps—monitoring, evaluation, and governance features that operationalize agents.

- Prompt Tracing: Log every input/output pair, intermediate reasoning step, and tool invocation with MLflow Tracing or Unity Catalog event streams.

- Toxicity & PII Filters: Integrate moderation layers, either through wrapper models or policy-driven filters, to detect and redact sensitive or unsafe content.

- Cost & Token Tracking: Monitor inference costs, latency distributions, and token usage per agent, per task, per tenant. Audit logs are fed into Delta Live Tables for reporting.

- Observability Dashboards: Build structured dashboards showing task success rates, replan rates, tool error ratios, and SLA adherence.

- Evaluation Framework: Run golden-task benchmarks, regression suites, and human-in-the-loop reviews to validate performance before promoting changes to production.
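Two of the controls listed above, PII filtering and per-tenant token tracking, are simple enough to sketch without any platform dependency. Everything here is an assumption for illustration: the email-only regular expression is far narrower than a production PII filter, and `UsageTracker` with its flat price per 1,000 tokens is a hypothetical stand-in for real billing data.

```python
import re
from collections import defaultdict

# Deliberately narrow pattern: it catches simple email addresses only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact(text):
    """Replace email addresses before the text is logged or sent to a model."""
    return EMAIL.sub("[REDACTED_EMAIL]", text)


class UsageTracker:
    """Accumulate token usage per (agent, tenant) pair."""

    def __init__(self, price_per_1k_tokens):
        self.price_per_1k_tokens = price_per_1k_tokens  # hypothetical flat rate
        self.tokens = defaultdict(int)

    def record(self, agent, tenant, prompt_tokens, completion_tokens):
        self.tokens[(agent, tenant)] += prompt_tokens + completion_tokens

    def cost(self, agent, tenant):
        return self.tokens[(agent, tenant)] / 1000 * self.price_per_1k_tokens


tracker = UsageTracker(price_per_1k_tokens=0.5)
tracker.record("support-agent", "tenant-a", prompt_tokens=1200, completion_tokens=300)
tracker.record("support-agent", "tenant-a", prompt_tokens=400, completion_tokens=100)
tracker.record("support-agent", "tenant-b", prompt_tokens=800, completion_tokens=200)

safe_text = redact("Contact jane.doe@example.com today")
print(safe_text, tracker.cost("support-agent", "tenant-a"))
```

Keying usage by agent and tenant from the start makes it straightforward to roll the same records up into per-task or per-team reports later.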

With these controls, enterprises can shift from fragile demos to safe, auditable, and continuously improvable agent deployments — a core tenet of how to use Mosaic AI in Databricks effectively.
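The evaluation step can be reduced to a promotion gate: run a candidate against a fixed set of golden tasks and promote it only if it clears a threshold and does not regress against the current baseline. The sketch below assumes a trivial stub agent, two made-up tasks, and a hypothetical `min_score` of 0.9. It is not the Mosaic AI evaluation framework, only the shape of the decision.

```python
def evaluate(agent, golden_tasks):
    """Return the fraction of golden tasks whose check passes."""
    passed = sum(1 for task in golden_tasks if task["check"](agent(task["input"])))
    return passed / len(golden_tasks)


def can_promote(candidate_score, baseline_score, min_score=0.9):
    """Promote only if the candidate clears the bar and does not regress."""
    return candidate_score >= min_score and candidate_score >= baseline_score


# Hypothetical golden tasks: each pairs an input with a pass/fail check.
GOLDEN_TASKS = [
    {"input": "status of INV-1", "check": lambda out: "paid" in out},
    {"input": "status of INV-2", "check": lambda out: "overdue" in out},
]


def baseline_agent(prompt):
    # Stub that always gives the same answer, so it fails the second task.
    return "paid"


def candidate_agent(prompt):
    # Stub with a lookup table standing in for a real agent.
    return {"status of INV-1": "paid", "status of INV-2": "overdue"}[prompt]


baseline_score = evaluate(baseline_agent, GOLDEN_TASKS)
candidate_score = evaluate(candidate_agent, GOLDEN_TASKS)
print(baseline_score, candidate_score, can_promote(candidate_score, baseline_score))
```

Human-in-the-loop review would sit alongside a gate like this one rather than replace it, covering the qualities that automated checks cannot score.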

Modak Partnership as the Differentiator

The difference between proof-of-concept and enterprise transformation is execution. As a Databricks Preferred Partner, Modak is the trusted ally enterprises turn to — bringing years of architectural rigor and domain experience to translate Databricks’ powerful platform into a resilient, future-ready data strategy.

Avoiding Anti-Patterns

Enthusiasm often drives enterprises into quick POCs that collapse under familiar pitfalls. These anti-patterns are well documented, and avoiding them is essential to moving from demo to deployment.

- RAG without freshness results in outdated answers that destroy user trust. Event-driven updates in Databricks ensure vector indexes stay current with the latest data.

- Orchestration without observability leads to black-box agents that no one can debug. MLflow and OpenTelemetry integrations turn every agent into an observable system.

- Opaque tool contracts allow hallucinations to trigger unintended actions. Schema-constrained APIs and idempotency keys prevent these failures.

Avoiding these pitfalls requires more than features—it requires disciplined execution. Databricks provides the tooling; Modak provides the architectural playbooks and governance mindset to prevent failures at scale.
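The idempotency-key safeguard mentioned above is easiest to see in the failure case it protects against: a write succeeds but the response is lost, so the caller retries. The sketch below simulates that with a hypothetical `FlakyTicketApi` and a small `call_with_retry` helper. Both are illustrative, not part of any Databricks or third-party SDK.

```python
import time
import uuid


class FlakyTicketApi:
    """Simulated external system that writes first and may then drop the response."""

    def __init__(self, dropped_responses):
        self.dropped_responses = dropped_responses
        self.tickets = {}  # idempotency key -> ticket

    def create_ticket(self, idempotency_key, summary):
        # A repeated key returns the existing ticket instead of creating a new one.
        if idempotency_key not in self.tickets:
            ticket_id = f"T-{len(self.tickets) + 1}"
            self.tickets[idempotency_key] = {"id": ticket_id, "summary": summary}
        if self.dropped_responses > 0:
            self.dropped_responses -= 1
            raise ConnectionError("response lost after write")
        return self.tickets[idempotency_key]


def call_with_retry(fn, attempts=3, base_delay=0.01):
    """Retry transient failures with exponential backoff."""
    for attempt in range(attempts):
        try:
            return fn()
        except ConnectionError:
            if attempt == attempts - 1:
                raise
            time.sleep(base_delay * 2 ** attempt)


api = FlakyTicketApi(dropped_responses=2)
key = str(uuid.uuid4())  # generated once per logical action, reused on every retry
ticket = call_with_retry(lambda: api.create_ticket(key, "Disk full on node 7"))
print(ticket, len(api.tickets))
```

Because the key is generated once per logical action and reused across retries, three attempts produce exactly one ticket. Without the key, each retry would have created a duplicate.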

Navigating the Trade-offs

Every enterprise architect faces the same design tensions when building agentic AI. These aren’t theoretical challenges; they are live trade-offs that directly impact cost, performance, and governance.

- Latency vs Accuracy: Real-time decisioning often demands speed, but accuracy may require deeper retrieval and reasoning. Databricks enables selective trade-offs by combining Delta Live Tables for low-latency streams with batch analytics for high-confidence insights.

- Cost vs Resilience: Redundancy ensures resilience, but at a cost. With autoscaling clusters and budget governance tools, Databricks allows architects to tune for the sweet spot—ensuring critical agents always run, while experimental workloads scale elastically.

- Model Scale vs Governance: Larger models can reason more richly, but governance must scale in tandem. Unity Catalog ensures that lineage, access, and auditability apply equally to embeddings, prompts, and models themselves.

The key is to treat these trade-offs not as accidents of engineering, but as explicit design decisions, continuously tuned and monitored. Databricks provides the substrate; Modak ensures those architectural contracts are honored in production.
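One way to make the latency-versus-accuracy trade-off an explicit design decision is to encode it as configuration that a router consults per request. The sketch below assumes two hypothetical retrieval plans with made-up latency figures. The names `RetrievalPlan`, `PLANS`, and `choose_plan` are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RetrievalPlan:
    name: str
    top_k: int
    rerank: bool
    expected_latency_ms: int  # hypothetical p95 figure, to be measured in practice


# Ordered from most accurate to cheapest.
PLANS = [
    RetrievalPlan("deep", top_k=20, rerank=True, expected_latency_ms=2000),
    RetrievalPlan("fast", top_k=5, rerank=False, expected_latency_ms=300),
]


def choose_plan(latency_budget_ms):
    """Pick the most accurate plan that fits the caller's latency budget."""
    for plan in PLANS:
        if plan.expected_latency_ms <= latency_budget_ms:
            return plan
    # Nothing fits the budget: fall back to the cheapest plan.
    return PLANS[-1]


interactive = choose_plan(latency_budget_ms=500)
batch = choose_plan(latency_budget_ms=10_000)
print(interactive.name, batch.name)
```

Putting the thresholds in data rather than scattering them through code means they can be reviewed, monitored, and retuned as measured latencies change.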

Convergence Stories: Where the Real Value Emerges

The most transformative opportunities in agentic AI lie at the intersection of paradigms. Databricks’ unified Lakehouse provides the interoperable substrate, and Modak ensures enterprises can architect convergence use cases without silos or governance blind spots.

- AI × Data Mesh – Domain-owned, federated data products managed in Unity Catalog empower specialized agents for finance, HR, and operations. Modak helps enterprises implement mesh-aligned governance and embedding strategies, ensuring agents can access the right data with the right guardrails.

- AI × IoT – Edge telemetry streams into the Lakehouse via Delta Live Tables, where agents detect anomalies and trigger preventive maintenance in real time. Modak designs the streaming + agent orchestration patterns to handle both low-latency signal detection and deeper diagnostic workflows.

- AI × Security – Agents continuously analyze logs, enforce identity and access policies, and flag anomalies. With Unity Catalog, AI Gateway, and MLflow tracing, Databricks provides observability and governance. Modak enables enterprises to weave security policies directly into orchestration and tool contracts, ensuring compliance isn’t bolted on but embedded in design.

These convergence stories are where agentic AI shifts from incremental efficiency gains to enterprise-scale transformation. Databricks provides the stack; Modak ensures a strategic, compliant, and production-ready architecture.

Closing: Architecture Is the Strategy

The lesson from early adopters is clear: agentic AI fails when treated as a tool-first experiment. Success comes from architecture-first thinking—designing memory, governance, orchestration, and evaluation from the start.

Databricks Mosaic AI makes that possible. It turns the vision of autonomous, tool-using agents into scalable, secure, and composable enterprise systems. By grounding innovation in architecture rather than tools, enterprises can move beyond chatbots, build trust in AI, and unlock the full potential of autonomous intelligence.

The future of AI in the enterprise is agentic. And the enterprises that thrive will be those that engineer their way into it, not stumble into it.