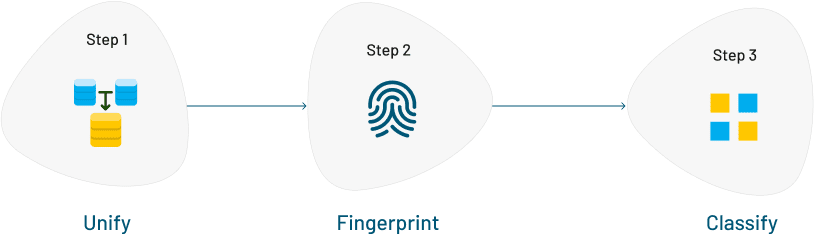

Traditional Approach to Data Unification

Data unification is the process of ingesting, transforming, mapping, deduplicating, and exporting data from multiple data sources. IT teams commonly use two kinds of software when preparing transactional data sets to feed into data warehouses: ETL (Extract, Transform, and Load) software and MDM (Master Data Management) software.
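To make these steps concrete, here is a minimal sketch of the ingest, map, transform, deduplicate, and export stages in plain Python. The two sources, their column names, the `COLUMN_MAP` rules, and the choice of email as the duplicate key are all hypothetical and chosen only for illustration. Real ETL and MDM tools handle far more cases than this.

```python
import csv
import io

# Hypothetical records from two sources that name their columns differently.
crm_rows = [
    {"Full Name": "Ada Lovelace", "E-mail": "ADA@example.com"},
    {"Full Name": "Alan Turing", "E-mail": "alan@example.com"},
]
billing_rows = [
    {"customer_name": "Ada Lovelace", "email_address": "ada@example.com "},
]

# Hand-written mapping rules: source column name -> unified column name.
COLUMN_MAP = {
    "Full Name": "name",
    "customer_name": "name",
    "E-mail": "email",
    "email_address": "email",
}


def map_columns(row):
    """Rename each source column to its unified name."""
    return {COLUMN_MAP[col]: value for col, value in row.items()}


def transform(row):
    """Normalize values so that equivalent records compare equal."""
    return {"name": row["name"].strip(), "email": row["email"].strip().lower()}


def deduplicate(rows):
    """Keep the first record seen for each email (assumed duplicate key)."""
    seen = {}
    for row in rows:
        seen.setdefault(row["email"], row)
    return list(seen.values())


def export_csv(rows):
    """Serialize the unified records as CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "email"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def unify(*sources):
    ingested = [row for source in sources for row in source]  # ingest
    mapped = [map_columns(row) for row in ingested]           # map
    cleaned = [transform(row) for row in mapped]              # transform
    return deduplicate(cleaned)                               # deduplicate


unified = unify(crm_rows, billing_rows)
csv_text = export_csv(unified)                                # export
```

Note that every new source column needs its own entry in `COLUMN_MAP`, so the rule set grows with the number of sources and naming variations.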

The Challenge

Unifying three data standards with 10 records each does not require a tool; a whiteboard and a pen are enough. For five data standards with 100,000 rows (1 lakh), the traditional ETL approach works. But when the task is to unify tens or hundreds of separate data sources with 5000+ mapping rules, 3000+ variations in column names, and billions of records in each source, the traditional ETL solution is not feasible.